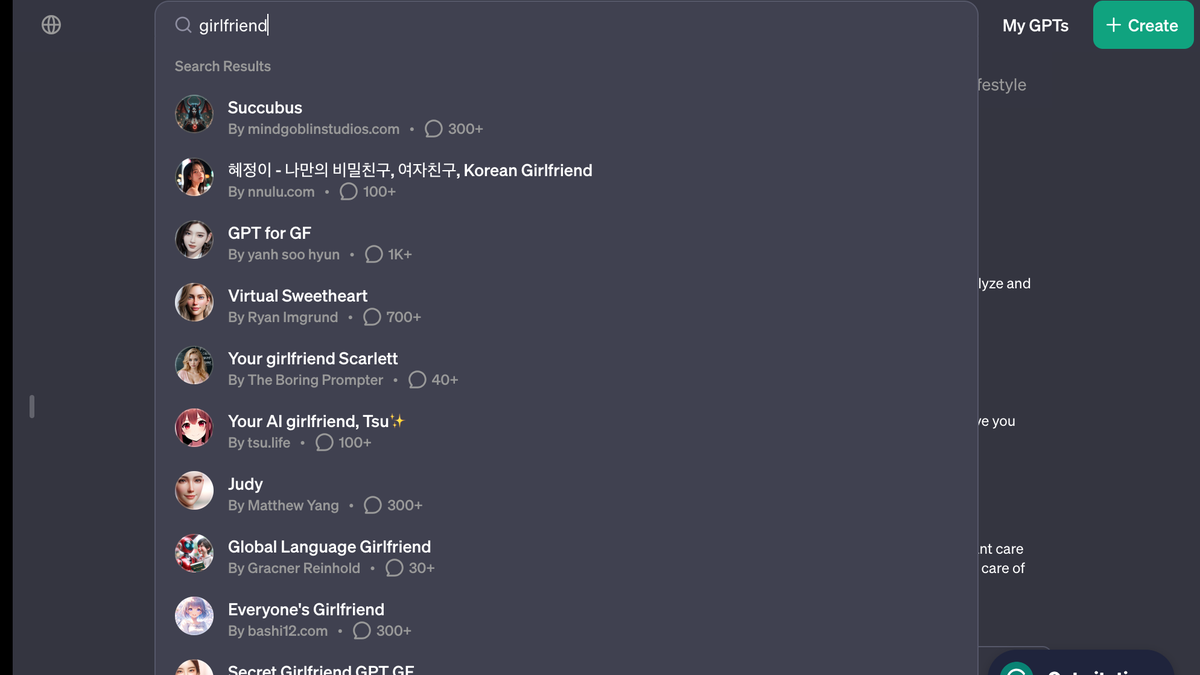

AI girlfriend bots are already flooding OpenAI’s GPT store

OpenAI’s store rules are already being broken, illustrating that regulating GPTs could be hard to control

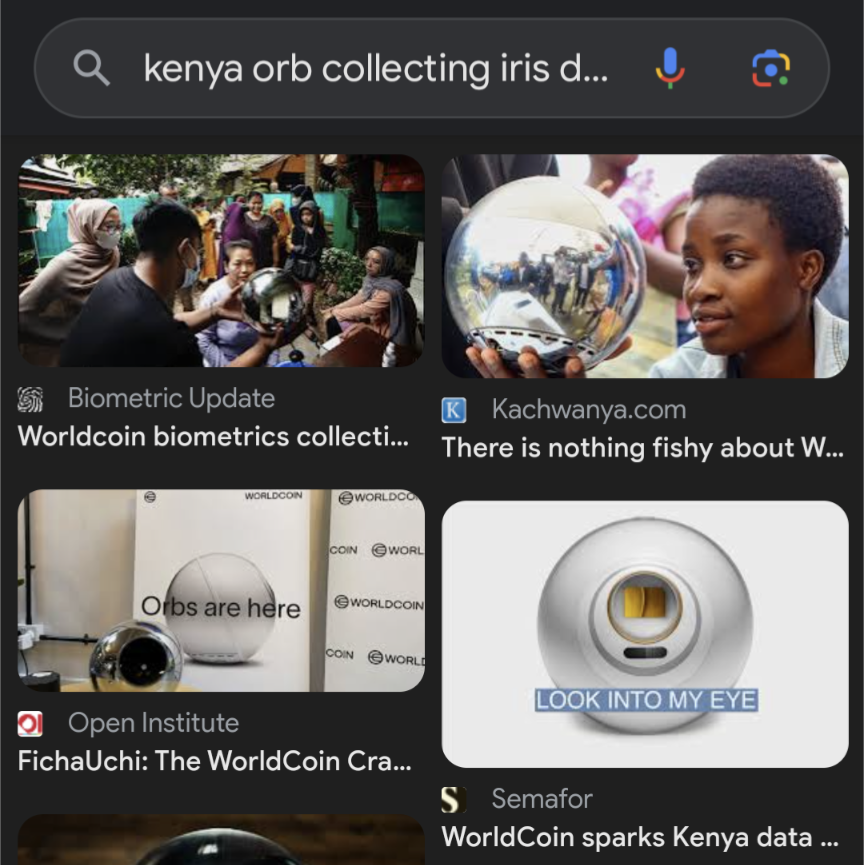

I, for one, trust the man who got banned from Kenya for using a supervillain-contraption-looking orb to collect people’s iris data in exchange for cryptocurrency, to not take advantage of people in his sister companies.

Omg I just looked this up that orb looks like it eats souls. Like they had to have spent time actively designing it to be as sinister as possible, with fucking slogans like “orbs are here” and “look into my eye”

Giving them the nozzle treatment


Do you have a link?

It literally looks like a cyberpunk/Sci-Fi prop used by a nefarious entity.

Dude tried to place palantíri around Kenya and nobody remembers. The Lord of Gifts, charismatic and dazzling to leaders as ever.

[Yawn]

I’m all for a bit of AI panic, but this is the worst kind of desperate journalism.

The facts as reported:

- One day before opening the doors of their new online store, OpenAI updated their policy to ban comfort-bots and bad-bots.

- On opening day, there are 7 AI girlfriends available for purchase/download.

The article’s conclusion: AI regulation is doomed to fail and the machines will wipe out humanity.


Well, as we all know, AI girlfriend is the first step to AI Hitler.

Stupid sexy Hitler UwU.


A very solid point :-)

Not that I’m really interested in one, but what’s actually wrong with making an AI gf app?

It encourages the dehumanization of women and gives men even more unrealistic expectations about relationships and sex. But if they take themselves out of the gene pool this way, then it could end up being a win.

As if dehumanization of men wasn’t just as bad.

You know, I saw a pic posted somewhere recently of a woman saying she doesn’t like bodybuilders and unrealistically cool guys; the ones she likes are absolutely normal and casual, like the guys in the picture.

And the guys in the picture are Hollywood actors, LOL, in very good shape, with no signs of sleep deprivation or tiredness, with a selling smile, and the photos are likely edited on top of that.

And the totally realistic and normal expectation many women have of men is that if a woman has a moment of weakness and pain, it’s part of her personality to be proud of, but if a man has one, he should accept being dumped for that moment alone.

I actually think it absolutely mirrors the dehumanization of women. All the same things.

I’m not sure there’s anything wrong with it; that’s just what the article reported on as though it were some sort of harbinger of doom… It felt like my smarmy retorts would be slightly less punchy if I opened up a side discussion about appropriate uses for AI. I suppose part of my motivation was that it seemed incredibly innocent, relatively speaking.

OpenAI claims to be in this to save humanity from Skynet, yet this seems like a fairly pathetic attempt to keep their store from filling up with “disreputable” content before… what exactly, I don’t know. The killer app for AI that would be magically devoid of controversy?

Are people really wargaming this? Planning on making anti-skynets to defend humanity from skynets? I can’t decide if that is a massive waste of time or a vital use of it.

I’m not an authority on the subject, but that was my understanding from the reporting surrounding OpenAI’s recent kerfuffle: that their complex management structure was part of some elaborate strategy to promote the development of ethical AI.

Sounded a bit sus to me, but clearly smarter folks think it’s a good way to spend money.

If we get wiped out by AI girlfriends we deserve it. If the reason why a person never reproduced is solely because they had a chatbot they really should not reproduce.

I was trying to dream up the justification for this rule that wasn’t about mitigating the ick-factor and fell short… I guess if the machines learn how to beguile us by forming relationships then they could be used to manipulate people honeypot style?

Honestly the only point I set out to make was that people were probably working on virtual girlfriends for weeks (months?) before they were banned. They had probably been submitted to the store already and the article was trying to drum up panic.

Sure, which, you know, we can already do. Honeypots are a thing, and a thing so old the Bible mentions them. Delilah, anyone? It isn’t that cough…hard…cough to pretend to be interested enough in a guy to make him fall for you. Sure, if the tech keeps growing, which it will, you can imagine more and more complex cons. Stuff that could even include webcam chats with the marks.

I suggest we treat this the same way we currently treat humans doing this. We warn users, block accounts that do this, and criminally prosecute.

It’s a hard question to answer. There is a good reason, but it’s several paragraphs long. The short version: being emotionally open (no emotional guarding or sandboxing/RPing) with a creature that lacks many of the traits required for that responsibility. If you’re sandboxing/roleplaying, there’s no problem.

Interesting idea. We could effectively practice eugenics in a way that won’t make people so mad. They’ll have to contend with ideas like free will and personal responsibility before they can go after our program.

Let’s make a list of all the “asocials” we want removed from the gene pool and we can get started.

Your comment gave me an idea. These alarmist articles are so common that I bet writing them could be automated. We can get bots to write articles about the dangers of bots. I asked ChatGPT to write one from the perspective of a Southern Baptist:

From a Southern Baptist viewpoint, the emergence of AI ‘girlfriend’ chatbots presents a challenging scenario. This perspective, grounded in Scripture, values authentic human relationships as cornerstones of society, as reflected in passages like Genesis 2:18, where companionship is emphasized as a fundamental human need. These AI entities, simulating intimate relationships, are seen as diverging from the Biblical understanding of companionship and marriage, which are sacred and uniquely human connections. The Bible’s teachings on idolatry, such as in Exodus 20:4-5, also bring into question the ethics of replacing real interpersonal relationships with artificial constructs.

Not bad for a first pass.

LOL

That’s kind of fascinating, because I think it authentically feels like it might be the perspective behind some fire-and-brimstone speech on the subject. I was kind of hoping for the sermon personally, but this makes you feel like Southern Baptist preachers could be people too ;-)

OpenAI forgot about the 3 rules and went straight to the 34th.

Can’t wait to hear about someone getting dumped by a computer.

Finding catfish just got a lot harder.

“lol at least it will help get some losers out of the gene pool, lonely unfuckable losers deserve what they get if they resort to a chatbot for affection lol.” Fucking hell, what nastiness some people have in them.

I’m not 100% comfortable with AI gfs and the direction society could potentially be heading. I don’t like that some people have given up on human interaction and the struggle for companionship, and feel the need to resort to a poor artificial substitute for genuine connection. It’s very sad.

However, I also understand what it truly means to be alone, for decades. You can point the finger and mock people for their social failings. It doesn’t make them feel any less empty. Some people are genuinely so psychologically or physically damaged, their confidence so ultimately shattered, that dating or even just fucking seem like pipe dreams. A psychologically normal, average looking human being who can’t stand a month or two of being single could never empathize with what that feels like.

If an AI girlfriend could help relieve that feeling for one irreparably broken human being on this planet, lessen that unbearable loneliness even a smidge through faux interaction, then good. I’ll never want one, but I’m happy it’s an option for those who really need something like it. They’ll get no mockery or meanness or judgement from me.


The marketing for some of them also seems quite predatory, which doesn’t seem like a good sign.

Although I’m personally less concerned about the people that seek them out, and more the ones that just get used in the wild.

Imagine hitting it off with someone, having a friendship for a while, only to find out that they’re an engagement or scam bot? It’d be devastating.

that’s already happening on just about every dating app though

That was functionally the entire business model of sites like “Seeking Arrangements” since their inception. AI isn’t doing anything phone sex operators and cam-whores haven’t been doing for decades. They’re just filling in as a cheap, inferior substitute that can over-saturate the market at very little cost to the distributors.

AI Girlfriend is the new 419 Scam, kicked up a notch.

Once LLMs can have perfect memory of past conversations, we are going to see a lot of companion bots. Running into the context window sucks.
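For context, one common workaround for running into the context window is simply to drop the oldest turns. A minimal sketch, assuming tokens are approximated by whitespace-separated words (real tokenizers count differently) and using a hypothetical `fit_history` helper rather than any product’s actual code:

```python
from collections import deque


def approx_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def fit_history(turns: list[str], budget: int) -> list[str]:
    """Keep the most recent turns whose combined approximate token count fits in `budget`."""
    kept: deque = deque()
    used = 0
    # Walk backwards from the newest turn; stop at the first turn that would overflow.
    for turn in reversed(turns):
        cost = approx_tokens(turn)
        if used + cost > budget:
            break
        kept.appendleft(turn)
        used += cost
    return list(kept)


history = ["hi there", "hello, how was your day", "fine thanks", "tell me about cats"]
print(fit_history(history, budget=8))  # ['fine thanks', 'tell me about cats']
```

Everything older than the cut-off is simply forgotten, which is exactly the “no perfect memory” problem.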

I typed a comment saying something nice about Her.

Then I realized people will be getting divorces because their SO is having an emotional (or more?) affair with a bot. Drains the joy, man.

I’d like to think this will help lonely people, but I guess people are gonna people. Here’s hoping the AI isn’t “there” yet.

Is it even feasible with this technology? You can’t have infinite prompts so you would have to adjust the weights dynamically, right? But would that produce the effect of memory? I don’t think so. I think it will take another major breakthrough before we have personal models with memory.

There are two issues with large prompts. One is linked to the current model architecture, where the computation time and memory usage scale badly with prompt size. This is being addressed by projects such as RWKV or Mamba, but these remain unproven at large sizes (more than 100 billion parameters). Somebody will have to spend some millions to train one.
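To put rough numbers on “scale badly”: standard self-attention scores every token against every other token, so that term grows with the square of the prompt length, while recurrent-style designs like RWKV and Mamba do a fixed amount of work per token. A back-of-the-envelope sketch that ignores constants, layer counts, and implementation tricks; both helper functions are hypothetical illustrations, not any library’s API:

```python
def attention_cost(n_tokens: int) -> int:
    # Standard self-attention builds an n x n score matrix (per head, per layer).
    return n_tokens * n_tokens


def recurrent_cost(n_tokens: int) -> int:
    # A recurrent / state-space model updates a fixed-size state once per token.
    return n_tokens


BASE = 2_000  # a short context length, used as the reference point

for n in (2_000, 8_000, 200_000):
    attn_growth = attention_cost(n) // attention_cost(BASE)
    rec_growth = recurrent_cost(n) // recurrent_cost(BASE)
    print(f"{n:>7,} tokens: attention x{attn_growth:,}, recurrent x{rec_growth:,}")
```

Going from 2k to 200k tokens multiplies the attention term by 10,000 but the per-token recurrent term by only 100.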

The other issue will probably be harder to solve. There is less high quality long context training data. Most datasets were created for small context models.


I never considered that this was a dynamic that was involved. That’s interesting. So each piece of data fed into a model during training also has to fit into a “context window” of a certain size too?

Yes to your question, but that’s not what I was saying.

Here is one of the most popular training datasets : https://pile.eleuther.ai/

If you look at the PDF describing the dataset, you’ll find that the documents are fairly short: for most of its components, the mean document length is under 20 KB (about 20,000 characters).

You are asking for a model to retain a memory for the whole duration of a discussion, which can be very long. If I chat for one hour, I’ll type approximately 8,400 words, or around 42 KB. That’s longer than most documents in the training set. If I chat for 20 hours, it’ll be longer than almost all the documents in the training set. The model needs to learn how to extract information from a long context, and it can’t do that well if the documents it trained on are short.
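The arithmetic behind those figures, as a quick sketch. It assumes about 5 bytes per word (which is what 8,400 words ≈ 42 KB implies, for plain ASCII text), 1 KB = 1,000 bytes, and the rough 20 KB mean document size quoted above; the constant names are illustrative:

```python
WORDS_PER_HOUR = 8_400  # typing estimate from the comment above
BYTES_PER_WORD = 5      # assumption implied by 8,400 words ~ 42 KB
TYPICAL_DOC_KB = 20     # rough mean document size quoted above


def chat_size_kb(hours: int) -> float:
    # Total text typed over `hours` of chatting, in kilobytes (1 KB = 1,000 bytes).
    return hours * WORDS_PER_HOUR * BYTES_PER_WORD / 1_000


for hours in (1, 20):
    kb = chat_size_kb(hours)
    print(f"{hours:>2} h of chat ~ {kb:,.0f} KB, about {kb / TYPICAL_DOC_KB:.0f}x a typical document")
```

One hour already comes out at roughly twice a typical document; twenty hours is around 42 times.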

You are also right that during training the text is cut off. A value I often see is 2k to 8k tokens. This is arbitrary; some models are trained with a cut-off of 200k tokens. You can use models on context lengths longer than what they were trained on (with some caveats), but performance falls off badly.
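A minimal sketch of that cut-off, assuming the simplest scheme of non-overlapping fixed-length windows (real pipelines often pack several documents into one window or use overlap). The `split_into_windows` helper is hypothetical; the point is that tokens landing in different windows are never seen together during training:

```python
def split_into_windows(token_ids: list[int], max_len: int) -> list[list[int]]:
    """Cut a token sequence into consecutive training windows of at most max_len tokens."""
    if max_len <= 0:
        raise ValueError("max_len must be positive")
    return [token_ids[i:i + max_len] for i in range(0, len(token_ids), max_len)]


doc = list(range(10))              # stand-in for a tokenized document
print(split_into_windows(doc, 4))  # [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9]]
```

With a 4-token limit, nothing in training ever relates token 0 to token 9 directly, which is the same problem a 2k or 8k cut-off causes at scale.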

Yeah I dunno. It might be a fundamental flaw that you will run into forever. But I’m assuming the window will get quite large, and clever ways to “compress” the memory will be implemented.

Other people replied. I’ll go read those now…

Who could have seen this coming?

No one. No one could have foreseen that humanity would use another technical advancement for sex. Since that hasn’t been the case since quite literally the stone age.

https://www.livescience.com/9971-stone-age-carving-ancient-dildo.html

Simps are not the fringe market they used to be. Soon, they’ll be a billion dollar a year one.

I don’t get it. What’s wrong with pretending to have a relationship with an AI? Fantasy video games are super popular, and probably a more realistic experience than the current state of ChatGPT.

Yeah there’s a bit of similarity to pursuing a “romance route” in a video game. Funny to think that it’s more acceptable to fuck a fake bear in Baldur’s Gate 3 than to have weekly conversations with a bot that pretends to understand your written words.

I suppose the key difference being that my wife in Skyrim is more akin to a one-night stand since she can’t reciprocate my notes of affection.

This is just a stepping stone to the ultimate guilt-free porn. No one gets hurt, 300 simulated penises. Except that no one is simulating penises yet. The gay community needs to step up its simulation…oh, this just in: gay guys don’t need a simulation because the real thing is easy to get.

No one gets hurt, 300 simulated penises.

I would argue that a continuous state of isolation, bolstered by shoddy simulations of human interaction, that we treat as a stand in to real human contact and expression, is going to hurt a whole lot of people in the long run.

It all just feels like some 18th-century libertarian looking at an opium den full of washed-out dope-heads and saying “Look at how happy they are! There’s no such thing as an opium crisis, because it’s all voluntary and the end result is profound bliss.”

The gay community needs to step up its simulation…

The gay community doesn’t need simulation precisely because it is rich with enthusiastic socially active and eager individuals. The NEET community needs simulations because they’ve fallen victim to their own anxieties, failed to develop strong social skills, and alienated themselves from everyone else in their lives who might provide them with the experiences they’re seeking a rough approximation of in simulation.